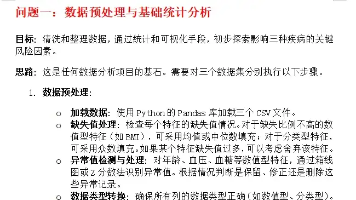

Connecting LlamaIndex to Ollama


Starting the Ollama service

1. Install Ollama.

2. Check that the Ollama service is running. It listens on port 11434 by default:

sudo lsof -i :11434

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

ollama 1741220 ollama 3u IPv4 36781837 0t0 TCP localhost:11434 (LISTEN)
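The same check can be done from Python, which is handy before launching an agent script. The sketch below is a minimal example, not part of the original post: `ollama_is_up` is a hypothetical helper name, and it assumes the server answers a plain HTTP GET on its root URL at the default port shown above.

```python
import urllib.error
import urllib.request


def ollama_is_up(base_url: str = "http://localhost:11434", timeout: float = 2.0) -> bool:
    """Return True if an HTTP server answers at base_url with status 200."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, DNS failure, or an HTTP error status
        return False


if __name__ == "__main__":
    print("Ollama reachable:", ollama_is_up())
```

If this prints `False` while `lsof` shows the port in LISTEN state, the base URL or a proxy setting is the likely culprit.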

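First program

Install llama_index, plus the Ollama integration package that provides llama_index.llms.ollama:

pip install llama-index llama-index-llms-ollama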
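Before running the scripts, it can help to confirm that both import paths they use actually resolve, since `llama_index.llms.ollama` ships in the separate `llama-index-llms-ollama` package. This is a small sketch using only the standard library; `has_module` is a hypothetical helper name.

```python
import importlib.util


def has_module(name: str) -> bool:
    """Return True if `name` can be located in the current environment."""
    try:
        return importlib.util.find_spec(name) is not None
    except (ImportError, ValueError):
        # Raised when a parent package is missing or the name is malformed
        return False


for module in ("llama_index.core", "llama_index.llms.ollama"):
    print(f"{module}: {'ok' if has_module(module) else 'MISSING'}")
```

A `MISSING` line for `llama_index.llms.ollama` usually means the integration package has not been installed into the active environment.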

# first_llamaindex.py
import asyncio

from llama_index.core.agent.workflow import FunctionAgent
from llama_index.llms.ollama import Ollama

# Create an agent backed by a local Ollama model (no tools yet)
agent = FunctionAgent(
    llm=Ollama(model="qwen2.5:0.5b", request_timeout=360.0),
    system_prompt="You are a helpful assistant.",
)


async def main():
    # Run the agent and print its reply
    response = await agent.run("who the hell you are")
    print(str(response))


if __name__ == "__main__":
    asyncio.run(main())

python first_llamaindex.py

I am an artificial intelligence language model developed by Alibaba Cloud. I am called Qwen, and I was created specifically to assist with various tasks such as data analysis, text generation, and more. Thank you for using me! If you have any questions or need assistance in the future, feel free to ask.

Testing with a tool

# first_llamaindex_tool.py
import asyncio

from llama_index.core.agent.workflow import FunctionAgent
from llama_index.llms.ollama import Ollama


# Define a simple calculator tool
def multiply(a: float, b: float) -> float:
    """Useful for multiplying two numbers."""
    print("using tool.......")  # shows when the agent actually calls the tool
    return a * b


# Create an agent workflow with our calculator tool
agent = FunctionAgent(
    tools=[multiply],
    llm=Ollama(model="qwen2.5:0.5b", request_timeout=360.0),
    system_prompt="You are a helpful assistant that can multiply two numbers.",
)


async def main():
    # Run the agent and print its reply
    response = await agent.run("What is 1234 * 4567?")
    print(str(response))


if __name__ == "__main__":
    asyncio.run(main())

python first_llamaindex_tool.py

using tool.......

The product of 1234 and 4567 is 56,356,780.
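The `using tool.......` line confirms the tool was invoked, but a model as small as qwen2.5:0.5b can still misreport the tool's return value in its final reply. Calling the function directly is a quick sanity check. The sketch below reuses the `multiply` definition from the script (without the debug print) and shows that the exact product differs from the figure in the reply above.

```python
def multiply(a: float, b: float) -> float:
    """Useful for multiplying two numbers."""
    return a * b


result = multiply(1234, 4567)
print(f"{result:,}")  # prints 5,635,678
```

When the printed reply and the direct call disagree like this, trying a larger model is a reasonable next step.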
